Simpleton: privacy-aware web analytics made with Nginx and GoAccess

Hacker News is full of articles by blog owners building their own custom web analytics[1]. It looked like a fun weekend project. Let me show you Simpleton Analytics.

TL;DR: visit the project repository and you can have your analytics set up in a few minutes.

My requirements were clear: privacy, price and time. I wanted to know the bare minimum about my visitors: no cookies, no session tracking, no sharing with third parties. It had to be cheap, running on the smallest VPS instance. It had to be fun to make, and the implementation needed to fit into a weekend.

“Illustration” by Ljubica Petkovic

Saturday

I started researching the possible technologies and quickly settled on storing access logs and processing them with GoAccess. No database: installing one, designing a schema for tracking requests and implementing data access in some HTTP server sounds like fun, but it would have at least tripled the effort I wanted to put in.

I almost ended up writing a custom Go HTTP server, though. There is a neat Go library called CertMagic that automatically handles obtaining Let’s Encrypt certificates. CertMagic powers the Caddy server, so, again in the name of iteration and to keep the scope within one weekend, I decided to use Caddy directly. But Caddy 2 only writes JSON logs, which are not compatible with GoAccess[2]. Stubbornly looking for a workaround took me almost the whole day before I gave up.
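For context, this is roughly the line format GoAccess expects: the combined log format that Nginx writes by default and that GoAccess reads with `--log-format=COMBINED`. The sketch below renders one request record into such a line. The `to_combined` helper and its input field names are hypothetical and are not Caddy 2’s actual JSON schema; they only illustrate what a JSON-to-combined converter would have to emit.

```python
from datetime import datetime, timezone


def to_combined(entry: dict) -> str:
    """Render one request record as a combined-log-format line.

    The keys of `entry` are hypothetical, chosen for illustration only;
    they do not mirror Caddy 2's real JSON log fields.
    """
    ts = datetime.fromtimestamp(entry["ts"], tz=timezone.utc)
    # Same layout as Nginx's $time_local, e.g. 06/Aug/2020:10:00:00 +0000
    time_local = ts.strftime("%d/%b/%Y:%H:%M:%S %z")
    request = f'{entry["method"]} {entry["uri"]} {entry["proto"]}'
    # addr - user [time] "request" status bytes "referer" "user agent"
    return (
        f'{entry["remote_addr"]} - - [{time_local}] "{request}" '
        f'{entry["status"]} {entry["size"]} '
        f'"{entry.get("referer", "-")}" "{entry.get("user_agent", "-")}"'
    )


line = to_combined({
    "ts": 1596708000, "remote_addr": "203.0.113.7", "method": "GET",
    "uri": "/blog/", "proto": "HTTP/1.1", "status": 200, "size": 512,
    "referer": "https://news.ycombinator.com/", "user_agent": "Mozilla/5.0",
})
print(line)
# 203.0.113.7 - - [06/Aug/2020:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 512 "https://news.ycombinator.com/" "Mozilla/5.0"
```

Timestamps are rendered in UTC here for simplicity; Nginx’s `$time_local` uses the server’s local time zone.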

Sunday

I knew that if I kept banging my head against the Caddy wall, I could say goodbye to the weekend time box. So I settled for a more boring solution: Nginx. Nginx was created ten years before Let’s Encrypt[3], and their integration is painful. You have to install more dependencies, and in the end you run a script that modifies your existing config files 😱[4]. There are literally thousands of blog articles describing how to do it.

After adding a trivial fetch() call to this blog site and fiddling a bit with the referrer header (I had to pass document.referrer to the call), I could see all page views nicely in the logs.
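Once the tracking requests land in the access log, each line can be split back into its fields, including the referrer. A minimal sketch, assuming Nginx’s default `combined` format; the `COMBINED` regex and the `parse_line` helper are illustrative names, and the pattern does not try to cover every edge case.

```python
import re
from typing import Optional

# Nginx's default "combined" format:
# $remote_addr - $remote_user [$time_local] "$request" $status
# $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED = re.compile(
    r'(?P<addr>\S+) \S+ (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def parse_line(line: str) -> Optional[dict]:
    """Split one combined-format line into named fields, or return None."""
    match = COMBINED.match(line)
    if match is None:
        return None  # not a combined-format line
    fields = match.groupdict()
    # "$request" looks like "GET /blog/ HTTP/1.1"; keep just the path.
    parts = fields["request"].split()
    fields["path"] = parts[1] if len(parts) >= 2 else None
    return fields


entry = parse_line(
    '203.0.113.7 - - [06/Aug/2020:10:00:00 +0000] "GET /blog/ HTTP/1.1" '
    '200 512 "https://news.ycombinator.com/" "Mozilla/5.0"'
)
print(entry["path"], entry["referer"])  # /blog/ https://news.ycombinator.com/
```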

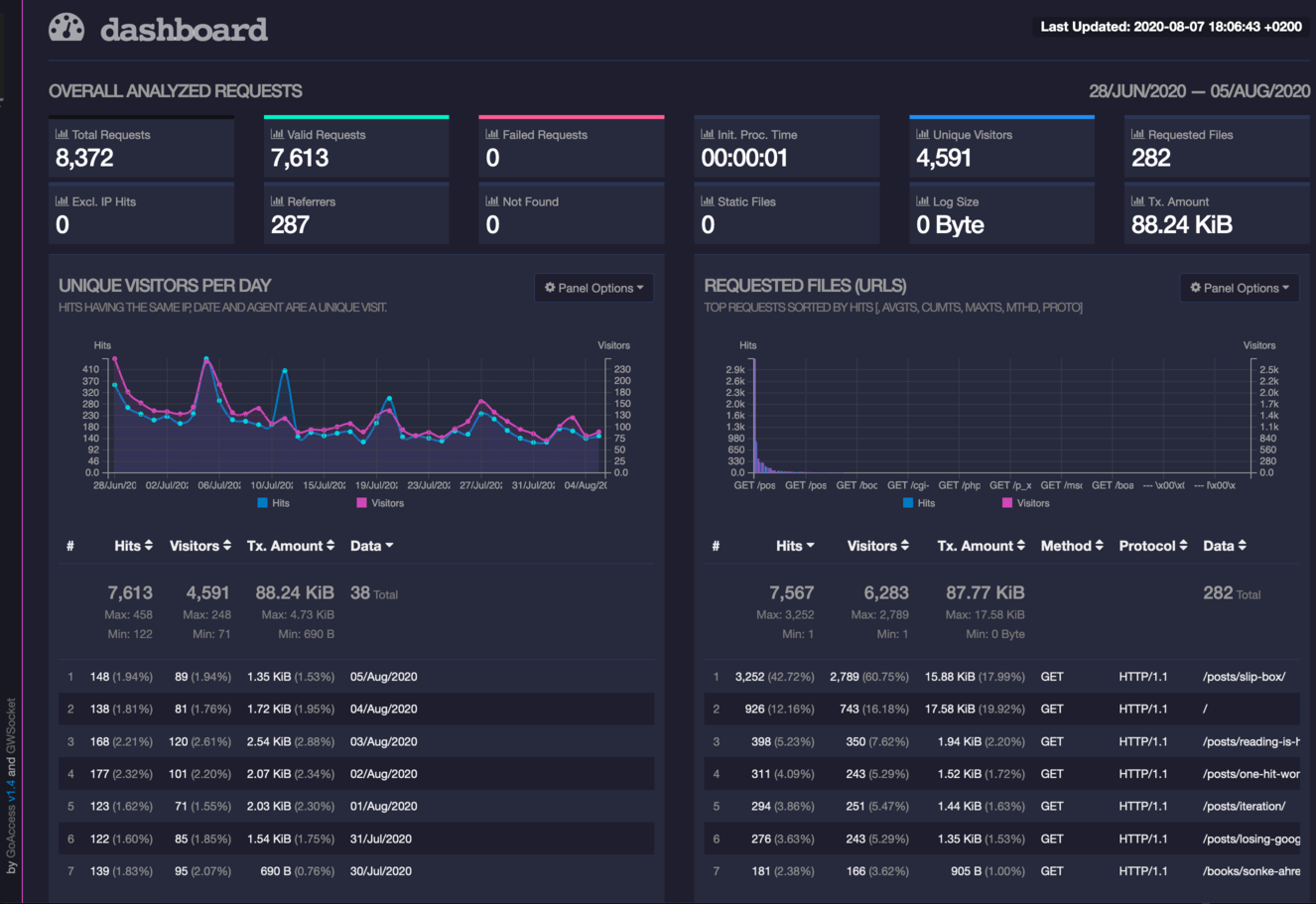

Now I just needed to process the access logs with GoAccess. That was a pleasant surprise: GoAccess worked well out of the box. Both the terminal and the HTML versions are great, and as a bonus the terminal version shows page views in real time.
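GoAccess does much more, but at its core a page-view report is counting. A rough sketch of that idea (not how GoAccess is implemented): count successful GET requests per path and per referrer with `collections.Counter`. The `page_views` helper, the simplified regex and the sample lines are made up for illustration.

```python
import re
from collections import Counter

# Captures method, path, status and referrer from a combined-format line.
LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)"'
)


def page_views(lines):
    """Count 2xx GET requests per path, and their non-empty referrers."""
    paths, referers = Counter(), Counter()
    for line in lines:
        m = LINE.match(line)
        if m is None or m["method"] != "GET" or not m["status"].startswith("2"):
            continue  # skip malformed lines, non-GET requests and errors
        paths[m["path"]] += 1
        if m["referer"] != "-":
            referers[m["referer"]] += 1
    return paths, referers


sample = [
    '203.0.113.7 - - [06/Aug/2020:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 512 "https://news.ycombinator.com/" "Mozilla/5.0"',
    '198.51.100.4 - - [06/Aug/2020:10:05:00 +0000] "GET /blog/ HTTP/1.1" 200 512 "-" "Mozilla/5.0"',
    '198.51.100.4 - - [06/Aug/2020:10:06:00 +0000] "GET /about/ HTTP/1.1" 404 0 "-" "Mozilla/5.0"',
]
paths, referers = page_views(sample)
print(paths.most_common())     # [('/blog/', 2)]
print(referers.most_common())  # [('https://news.ycombinator.com/', 1)]
```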

That concluded the weekend project: simple analytics that worked great. I even wrote an article about my observation that ad blockers hide the majority of visitors from web analytics.

Two weeks later

What is the default log retention for Nginx? You guessed it: two weeks. After two weeks, I noticed that older logs were disappearing. This was my chance to learn about logrotate, a utility that runs every midnight on Ubuntu. It splits your logs into day-long files and deletes the oldest ones once they exceed the configured number of rotations.

By default, it adds a numeric suffix to each rotated file and compresses it after two days. On day one you have access.log; on day three you have access.log, access.log.1 and access.log.2.gz. I’m not a fan of this default behaviour, so I changed the configuration to use date stamps instead. All glory to the access.log-20200806.gz format.
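Date-stamped names also make it easy to replay the whole history in order. A minimal sketch, assuming archives named like access.log-20200806.gz (the name logrotate’s `dateext` directive produces with its default date format) sitting next to the live access.log; `rotated_files` and `read_all_lines` are hypothetical helpers. Because the newest archive is not compressed yet (the two-day delay described above), the pattern accepts both plain and `.gz` names.

```python
import gzip
import re
from pathlib import Path

# Matches archives such as access.log-20200806 or access.log-20200806.gz
ROTATED = re.compile(r"^access\.log-(\d{8})(\.gz)?$")


def rotated_files(log_dir):
    """Return rotated access logs oldest first, followed by the live access.log."""
    directory = Path(log_dir)
    dated = []
    for path in directory.iterdir():
        m = ROTATED.match(path.name)
        if m:
            dated.append((m.group(1), path))
    # YYYYMMDD strings sort chronologically as plain strings.
    files = [path for _, path in sorted(dated)]
    live = directory / "access.log"
    if live.exists():
        files.append(live)
    return files


def read_all_lines(log_dir):
    """Yield every log line, transparently decompressing .gz archives."""
    for path in rotated_files(log_dir):
        opener = gzip.open if path.suffix == ".gz" else open
        with opener(path, "rt", encoding="utf-8", errors="replace") as handle:
            yield from handle
```

The generator is consumed lazily, so months of archives never need to be decompressed to disk at once.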

Two months later

All the previous work was done by manually typing commands and editing configuration files. I had created a snowflake server. Seeing that the server worked well, I thought it was a shame that nobody else could use it. So I wrote an Ansible provisioning script that installs the analytics automatically. That spawned another weekend project that you can benefit from right now. Ansible wasn’t the coolest choice, but it fit the requirements (time, cost) best.

Now you can spin up your own instance (I use a $5 DigitalOcean droplet) and try it for yourself. All you need is five bucks and a domain.

-

[2] The combined log format that GoAccess uses is out of scope for Caddy 2 (GitHub issue).